
Guest OS Customization with Personalization Script To better handle Replication Assisted vMotion (RAV) migration for VMs with high disk churn, the HCX-IX appliance memory requirement is increased 3 GB to 6 GB.

HCX Interconnect (HCX-IX) Appliance Memory With HCX 4.8.0, support for 32-bit guest operating systems for OSAM has been removed. Remove Support for 32-bit Guest Operating Systems HCX OS Assisted Migration now supports these additional Guest Operating Systems: RHEL 8.7 and RHEL 8.8. Please observe all vSphere migration maximums when increasing HCX scale within a cluster. To migrate workloads using HCX, see Migrating Virtual Machines with HCX.Ī scaled-out HCX 4.8 deployment increases the potential for exceeding vSphere maximums for vMotion migration limits. If no Service Mesh is selected, HCX determines the Service Mesh to use for the migration. You might choose a specific Service Mesh to use for a migration based on the parameters or resources associated with that Service Mesh configuration, or to manually load balance operations across the cluster. You can now explicitly select the Service Mesh during a migration operation. The new scale out patterns help mitigate the concurrency limits for Replication Assisted vMotion and HCX vMotion. Single and multi-cluster architectures can now leverage multiple meshes to scale transfer beyond the single IX transfer limitations. We’ve improved scaling options in HCX 4.8 by removing the notion of clusters as the limiting factor for HCX migration architectures. Single-Cluster Multi-Mesh Scale Out (Selectable Mesh)

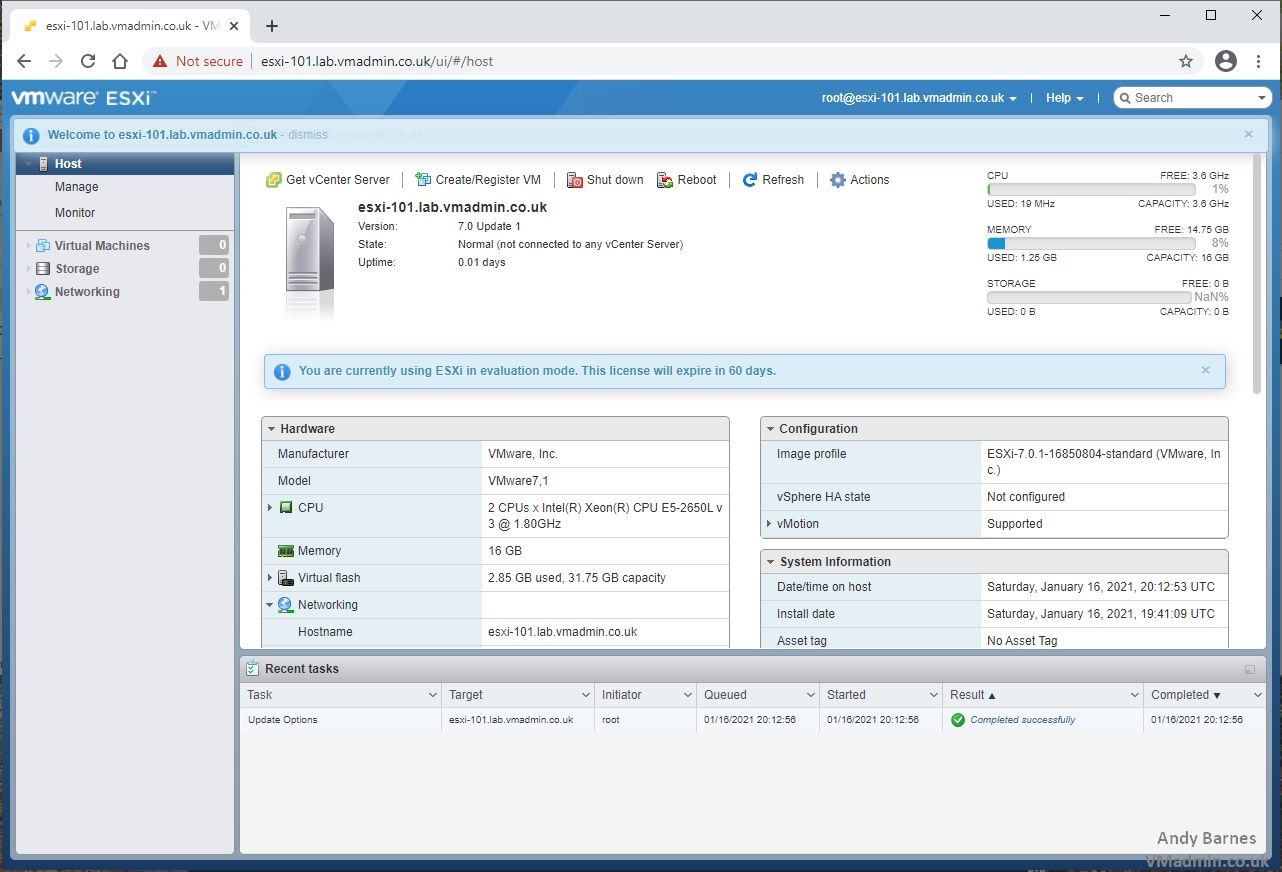

VMware HCX 4.8.0 is a minor release that provides new features, interoperability enhancements, usability enhancements, security enhancements and updates, and resolved issues. While the feature has completed its entire development cycle and it is fully supported in production, due to strong dependencies on the deployment environment, thorough validation and qualification is recommended before activating it. The following resources provide information related to HCX deployments:įor information regarding VMware product compatibility by version, see Product Interoperability Matrix.įor appliance limit information, see VMware Configurations Maximums.įor more information on where you can view port and protocol information for various VMware products in a single dashboard and to export an offline copy of the selected data, see VMware Ports and Protocols.įor information about General Availability (GA), End of Support (EoS), End of Technical Guidance (EoTG) for VMware software, see VMware Product Lifecycle Matrix.Įarly Adoption (EA) denotes an initial release phase of a critical service feature with limited field exposure. Now I understand at least why the GNS3 VM in GNS3 remains grey (it's because I have no reachability between my PC/host and the VM inside VMware).For HCX deployment requirements, see Preparing for HCX Installation.

The next time I booted the VM, I saw that IP, but I can't ping it from a DOS/CMD prompt. I also noticed the VM came up with an IP not in my local host's subnet, so I reserved an IP in my home Linksys router and configured that IP in the VM. I also decdided to uninstall GNS3 completely (v.2.2.6), rebooted and installed GNS3 v2.2.7 with no change (and that's with my FW and antivirus disabled still). I disabled my Windows FW and my antivirus software (MalwareBytes).

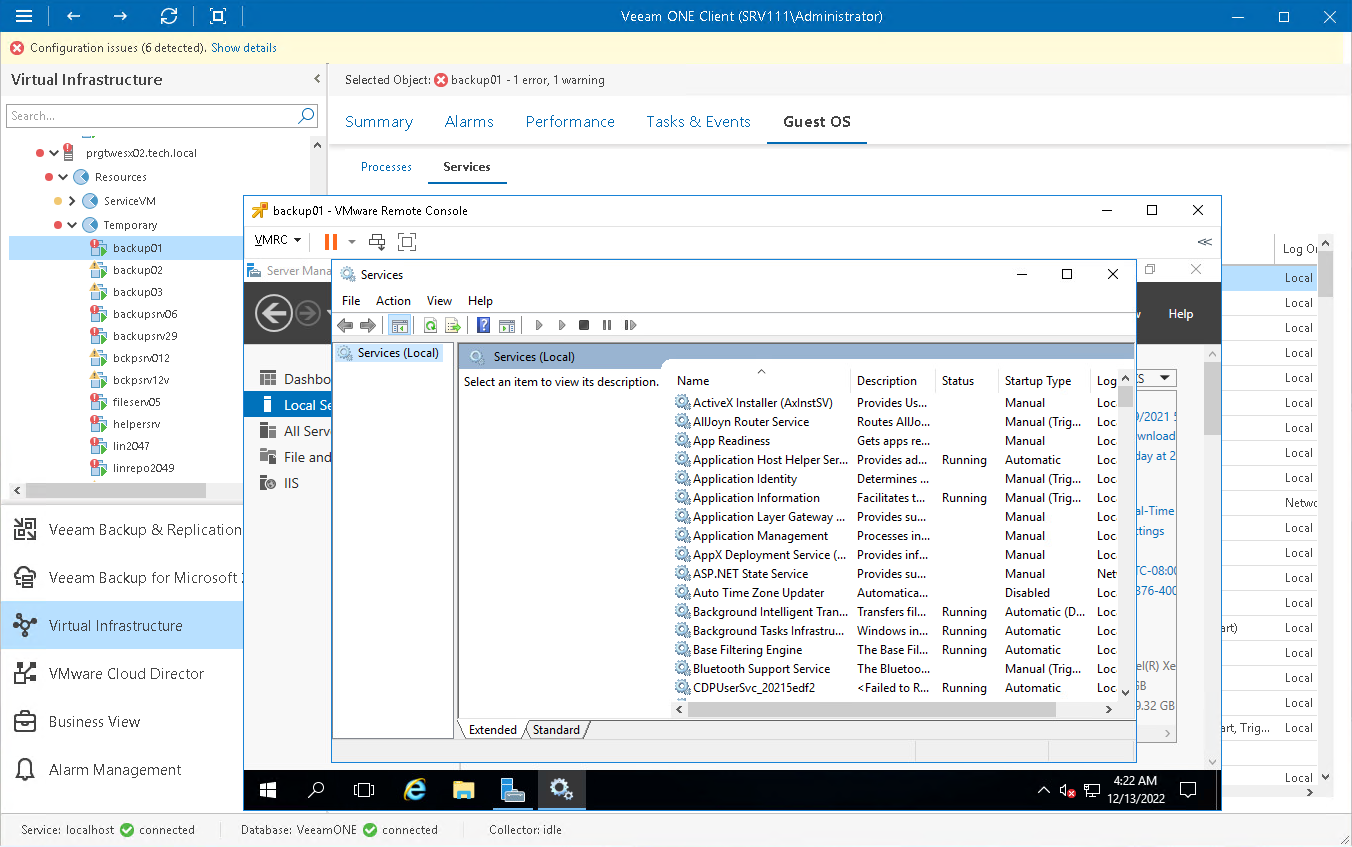

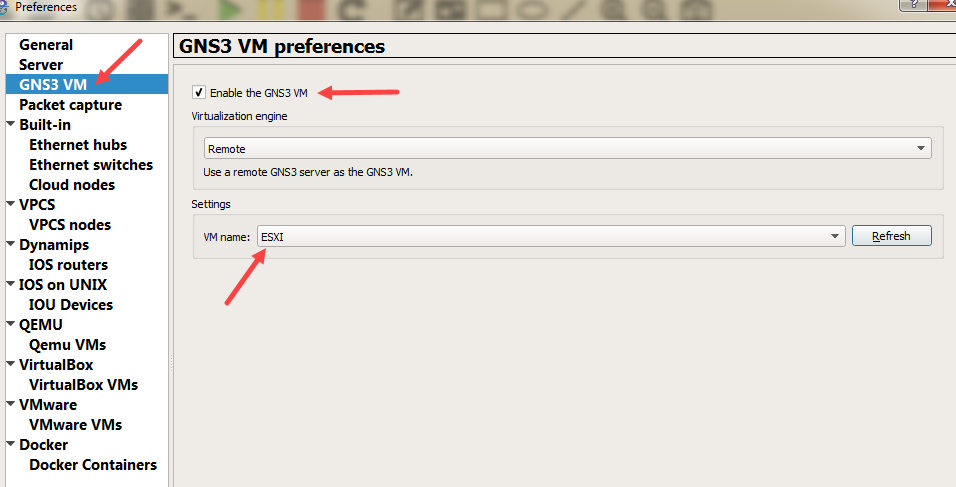

Within the VMWare software, the VM itself is running and it boots up to the screen that shows a number of options: In GNS3, under Preferences, I have "Enable the GNS3 VM" checked, the virtualization engine is VMware Workstation and the VM Name dropdown shows GNS3 VM. I also see the GNS3 VM LED, but it's grey. I see the one green icon that shows the CPU and RAM usage. I'm having an issue getting the GNS3 VM icon to turn green. Hello, I'm trying to tinker with Ansible and trying to get the VMWare VM working within the GNS3 app.
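Ping uses ICMP, and ICMP can be filtered even when TCP traffic gets through. A TCP connection test against the VM's server port can therefore help tell "no route to the VM" apart from "ICMP is blocked." Below is a minimal sketch, assuming Python 3 on the host. The helper name `can_reach` is hypothetical, and the IP address and port are placeholders. Replace them with the address and server port your GNS3 VM actually uses.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and performs a TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False


if __name__ == "__main__":
    # Hypothetical placeholder values: substitute the GNS3 VM's real IP and port.
    vm_ip = "192.168.1.50"
    vm_port = 80
    status = "reachable" if can_reach(vm_ip, vm_port) else "NOT reachable"
    print(f"GNS3 VM at {vm_ip}:{vm_port} is {status}")
```

If this check fails as well, the problem is more likely the VM's network adapter or addressing (for example, NAT vs. bridged vs. host-only in VMware) than ICMP filtering.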
